This is a series of articles originally published at Fabbaloo

Prologue

Part 1 — A bit of history

Part 2 — The technology

Part 3 — Voxels to the rescue

Part 4 — Implicits

Part 5 — All you need is a few functions

Let’s define what Computational Engineering is and how it differs from the way we currently use computers to design physical parts.

If you go back one hundred or even a thousand years, engineering was done with pen and paper. An engineer sat down with all their knowledge about a technical challenge and sketched a drawing. Using this sketch, someone else was able to build the object that the engineer described. Over the centuries, the process was refined, the tools became better, and the methodology became more and more sophisticated. Up until the 1990s, you could still see draftsmen sketching complex machines at their drafting tables.

We flew to the moon using slide rules and paper plans. It’s hard not to be impressed by the geniuses who kept the visual aspects of their designs in their heads while they methodically put them on paper.

A drafting table — Image Wikipedia

Obviously, this way of creating designs was very laborious. Iterating was hard, because you constantly had to redraw everything from scratch. So it is amazing that some of the most advanced machines, think of an SR-71 Blackbird or a Saturn V rocket, were created using this paradigm.

Computer Aided Design (CAD)

With the advent of computers, engineers started to digitize this process. Early attempts date back to the 1960s, but momentum really started to build in the 1980s. With the assistance of sophisticated software programs, engineers were able to draw their blueprints more easily, on a computer screen. These were the beginnings of Computer Aided Design, or simply CAD. But it is important to note what CAD is: a tool that aids you in the visual creation of your design. At first, these tools worked in two dimensions (like the paper they replaced); later, as computers’ capabilities increased, they allowed you to sketch in 3D.

But this was also arguably the last fundamental innovation in CAD: allowing you to draw objects in three dimensions, taking out the guesswork of imagining the spatial aspects of your design. To this day, CAD is what engineers use. All CAD apps are descendants of these early software packages, and all of them share the same visual sketching paradigm, one that dates back to humanity’s earliest engineers. We use the same process that people used in the Stone Age, when they visually described a plan to build something!

3D product design using CAD — Image Source Unsplash

Limitations of a visual metaphor

CAD applications have come a long way. They give engineers unprecedented flexibility and tools that ease their daily workload. CAD also allows for parametrization of geometries, but anyone who has used this feature extensively understands the issues. There simply are limits to the flexibility you can encode into one visual drawing. You might be able to change a few dimensions within reason, but the logic of the sketch, which relies on the dependencies of the drawing process, is very fragile and breaks easily. CAD was built to aid you in sketching an object. It doesn’t “understand” what you are building and cannot fix the drawing for you.

This is your job; it’s all in your head.

So, even though engineers are using a computer, their work is incredibly manual and repetitive. Some engineers are known to have worked on the same object for years, because every change request essentially required them to start over. That takes time, which in turn costs money, which means you avoid it. In practice, this translates into “don’t change anything unless you want to go through a lot of pain”.

Not a recipe for innovation.

A computational approach

Whenever we see something repetitive, we should immediately think of software algorithms.

What engineers do in their heads is essentially algorithmic: they use all their expertise to methodically sketch a three-dimensional object. They have years of experience. They know the requirements. They have seen previous objects and weighed their pros and cons. They understand the manufacturing process and take it into account.

While processing this knowledge, they push buttons and drag their mice to produce a visual part in 3D.

But could this algorithm not also be implemented in software?

Instead of engineers executing the process inside their heads and clicking buttons manually, could an algorithm not run inside of the computer, and generate the result directly?

This is not really anything new. We have seen procedural algorithms used in computer games to generate fantastic “infinite” landscapes full of complex buildings and detail. But interestingly enough, it is practically unheard of in engineering.

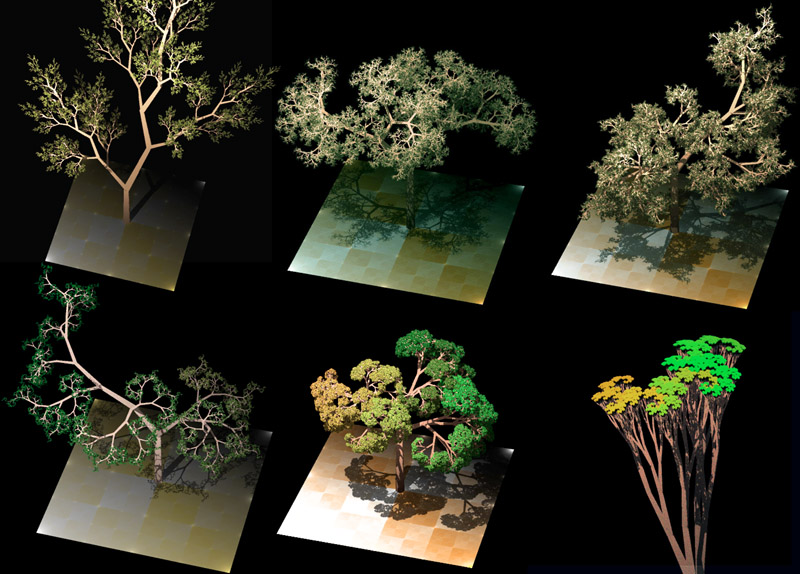

Procedurally generated tree structures, using L-Systems. Source Wikipedia
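To make the idea of procedural generation concrete, here is a minimal sketch of the string-rewriting core of an L-system. It uses Lindenmayer's classic "algae" rules (A becomes AB, B becomes A) rather than a tree, because that system needs no drawing step; tree-like shapes come from interpreting a richer alphabet as drawing commands, which is not shown here. The names `expand` and `ALGAE_RULES` are chosen for illustration only.

```python
def expand(axiom: str, rules: dict[str, str], iterations: int) -> str:
    """Apply the rewriting rules to every symbol in parallel, `iterations` times."""
    current = axiom
    for _ in range(iterations):
        # Symbols without a rule are copied unchanged.
        current = "".join(rules.get(symbol, symbol) for symbol in current)
    return current


# Lindenmayer's "algae" system: A -> AB, B -> A (illustrative choice)
ALGAE_RULES = {"A": "AB", "B": "A"}

if __name__ == "__main__":
    for n in range(5):
        print(n, expand("A", ALGAE_RULES, n))
```

Two short rules and a start symbol are the entire "design"; the detail emerges from running the algorithm, not from drawing it by hand.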

It’s much more common in architecture, where the complexity of buildings by architects like Zaha Hadid required teams to come up with a paradigm that automates the creation of some details of their construction.

But engineers are still stuck with CAD.

It’s time for a change: a move to a computational approach, where the drawing pen is held by an algorithm and not directed by an engineer’s mouse.

3D Printing as a catalyst

Arguably, the advent of industrial 3D Printing was a key moment in the movement to computerize the engineering paradigm. Traditional production methods require us to know a lot about the details of manufacturing, with many rules and different ways of doing the same thing. A 3D Printer, by contrast, essentially fabricates anything that you throw at it, as long as it conforms to a few simple constraints.

Additionally, 3D Printers allowed us, for the first time, to produce objects with intricate details, like lattice structures, that are easy to print, but complex to design.

MakerBot Replicator 2 Desktop 3D Printer in University of Toronto Scarborough’s library — Source Wikipedia

Like many, I got interested in 3D Printing at the height of the MakerBot craze. New software companies, like nTopology, started to appear, laying the groundwork for what became “Design for Additive Manufacturing” (DfAM). At their core was a new way to think about geometry. There was no longer a “blueprint” or a sketch of a surface. Instead, these packages started to see a part as a field of matter. In this paradigm, objects are formulas instead of manually placed and meticulously fitted surface patches.
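As a rough illustration of what "objects are formulas" can mean, the sketch below describes a hollow ball as a function of position: negative or zero values are inside the material, positive values are outside. This is a generic signed-distance-style example, not the method of any particular software package; the function names (`sphere`, `subtract`, `is_solid`) and the dimensions are assumptions made up for the example.

```python
import math
from typing import Callable

# A field maps a point (x, y, z) to a number: <= 0 means "solid here".
Field = Callable[[float, float, float], float]


def sphere(cx: float, cy: float, cz: float, radius: float) -> Field:
    """Signed distance to a sphere: negative inside, positive outside."""
    def field(x: float, y: float, z: float) -> float:
        return math.sqrt((x - cx) ** 2 + (y - cy) ** 2 + (z - cz) ** 2) - radius
    return field


def subtract(a: Field, b: Field) -> Field:
    """Material of `a` with `b` carved out (inside a AND outside b)."""
    return lambda x, y, z: max(a(x, y, z), -b(x, y, z))


def is_solid(field: Field, x: float, y: float, z: float) -> bool:
    """True if the point lies in or on the material."""
    return field(x, y, z) <= 0.0


# Hypothetical part: outer radius 10, inner radius 8, giving a 2-unit wall.
shell = subtract(sphere(0, 0, 0, 10.0), sphere(0, 0, 0, 8.0))

if __name__ == "__main__":
    for x in (0.0, 9.0, 11.0):
        print(x, is_solid(shell, x, 0.0, 0.0))
```

Changing the wall thickness means changing one number; there are no surface patches to refit. Note that combining fields with `max` keeps the boundary correct, but the result is no longer an exact distance everywhere.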

As a result, things like sophisticated infills and complex structures became more commonplace.

I realized that digital manufacturing methods had the potential to completely change how we build physical things. That, in turn, could make the world a lot less boring and put us on a trajectory to the inspiring future that I had always taken for granted.

So, I left Adobe, which had acquired my previous company IRIDAS in 2014, to look closely into this new field that was opening up. After much research, I created a team around my co-founder Michael Gallo and we went to work.

We set out to explore a new paradigm in engineering, with the clear initial target of 3D Printing. After a lot of back and forth, thinking of the best way to approach this, we decided on a name, Hyperganic (beyond the organic world), incorporated, and set up our website with a tongue-in-cheek teaser trailer.

It was also clear that we had to build a completely new technology stack, because nothing we saw out there supported what we wanted to do. We looked at everything and concluded that we had to start from scratch.

In the next article, I will discuss the technologies needed to build a computational approach to engineering, some of the choices we made, and how I see the technology stack today.